It’s the data, stupid.

Today’s frontier models (with open source models not far behind) are effectively the product of their training sets. Better (and more) data = better (and bigger) models. The data is the code. And you’ll note that even open source models don’t tend to share their training sets, because they’re dirty. Unlicensed data. The copyright showdowns are coming.

And the data isn’t good enough to take these agents to the next level of being genuinely useful, agentic, and above all else, reliable. We’ve moved from prompt engineering to context engineering because the type of data that these models have been trained on doesn’t get the job done, not without a lot of the hand-holding being poured into agent harnesses these days. Memory and context management stand out especially.

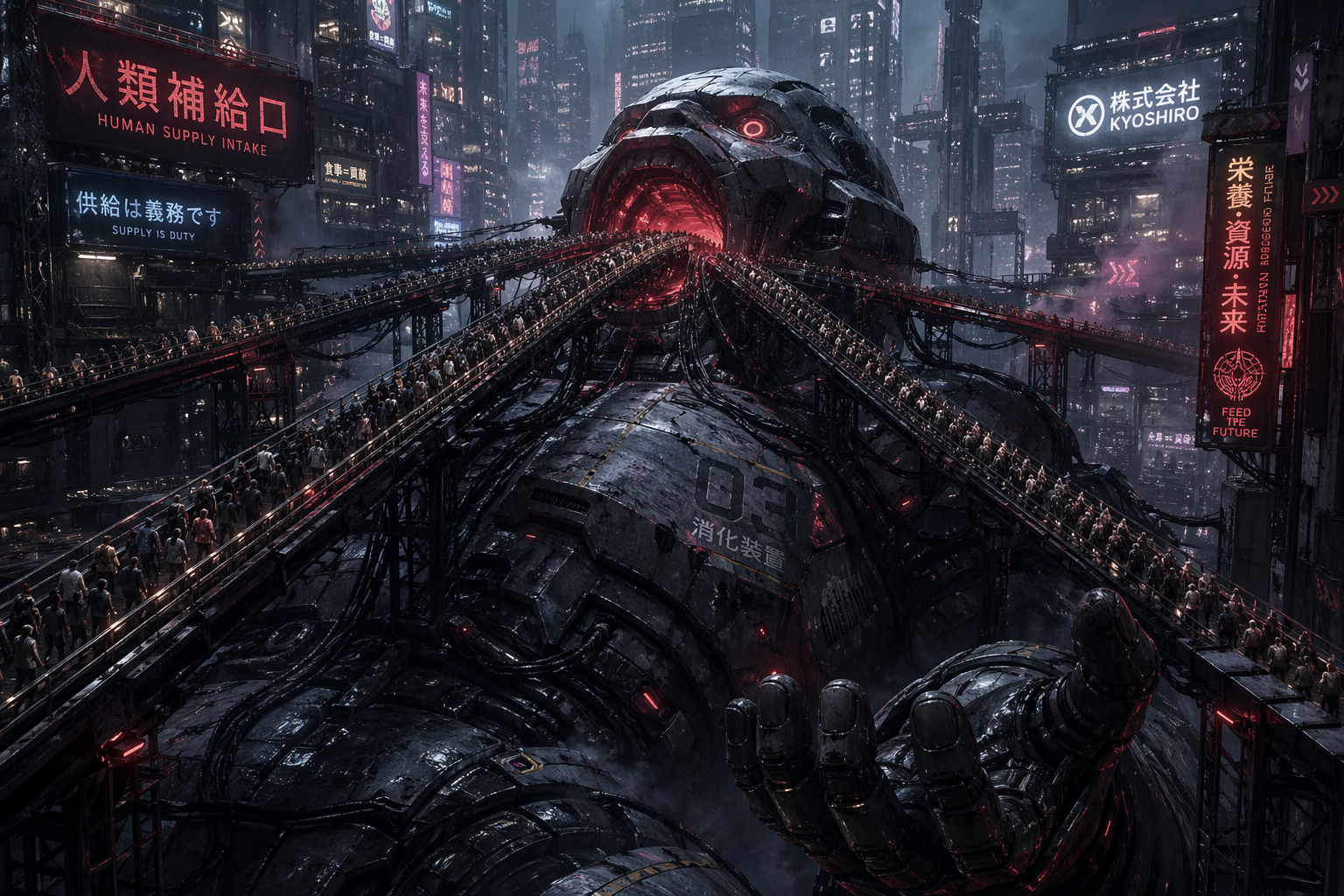

Why is Anthropic making sure you only use Claude Code with your subscription?[1] Because at $20 a month, you are the product.[2] Their training data did not include a whole lot of real people trying to accomplish real-world tasks. Too many shitposts, not enough work. So they’re training on your Claude Code sessions, just like OpenAI is training on your Codex sessions and xAI wants to train on your Cursor sessions, if the deal happens.

Because the web has never had all of the private data about how real business is done. CommonCrawl doesn’t have private communications and systems and processes. But the agent harnesses at the big labs have turned out to be a damned useful product, one that will probably prove to be the biggest Trojan Horse of all time: you’re helping to train a model to replace you.

I genuinely enjoy making software with agents. But let’s be real: I couldn’t opt out if I wanted to, at least not until I, like every other programmer, have fed it enough data to replace me.

1. They were so overzealous in blocking OpenClaw that even invoking `claude -p "prompt"` was getting blocked.
2. And Anthropic are already looking to phase out $20/month as not worth the inference/training ratio, behind a very clumsy “A/B Test”, while GitHub has paused individual plans entirely with tightened limits and no more Opus.